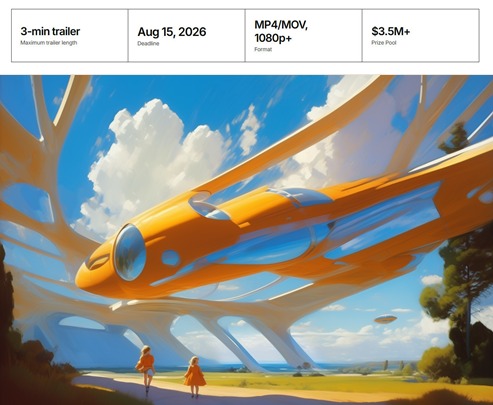

The Future Vision XPrize is now live, a $3.5 million film-making contest. Submit a three-minute trailer for a film that tells a story about an optimistic future. Specifically, show how good things will become real in a technologically-advanced future that is happening because of us (not being done ‘to us’). Animation and AI tools can be used. Deadline: 15th August 2026.

Category Archives: The Animation Industry

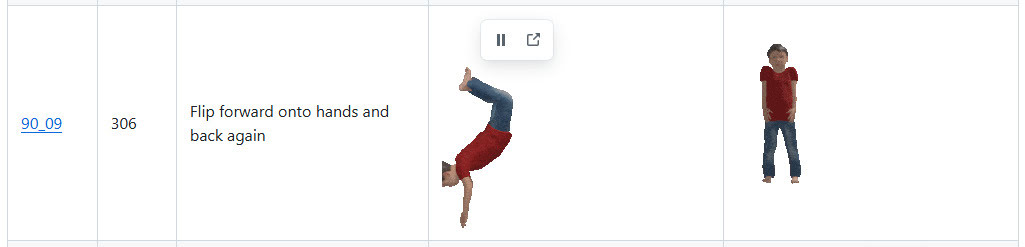

2,600 Carnegie Mellon .BVH files for Poser, as animated .GIF previews

I see that Shriinivas has an animated directory for the 2,600 Carnegie Mellon .BVH motion capture files. Released November 2023, you get little munchkins in .GIF animations which show each motion-capture.

The .BVH files are linked with a named link alongside each animation, but note that these don’t lead to the Poser versions. For Poser you want the cmu-ecstasy-motion-bvh-poser-friendly-2012 freebie archive. But the names in each set are the same. Thus Shriinivas’s “90_09” means that in the Poser files you need to look in folder “90” and there find the file “90_09.bvh”.
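The name-to-folder rule above is simple enough to script, if you have a long list of motions to fetch. A minimal sketch, assuming the Poser-friendly archive unzips to numbered folders directly under one root folder (the function name and the `archive_root` argument are my own, for illustration):

```python
from pathlib import Path


def poser_bvh_path(archive_root, motion_id):
    """Map a motion name such as '90_09' to <archive_root>/90/90_09.bvh."""
    subject, sep, trial = motion_id.partition("_")
    # Expect two numeric parts joined by an underscore, as in '90_09'.
    if not (sep and subject.isdigit() and trial.isdigit()):
        raise ValueError(f"expected a name like '90_09', got {motion_id!r}")
    return Path(archive_root) / subject / f"{motion_id}.bvh"


print(poser_bvh_path("cmu-poser-bvh", "90_09"))
```

The folder is taken from whatever precedes the underscore, so a name like “01_01” would be looked for in folder “01”.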

Scroll down his page to see the link to sub-pages for named ‘Animation Categories’, e.g.

Instructions for loading .BVH to a Poser figure, here. Sadly there’s still no drag-and-drop of .BVH in Poser.

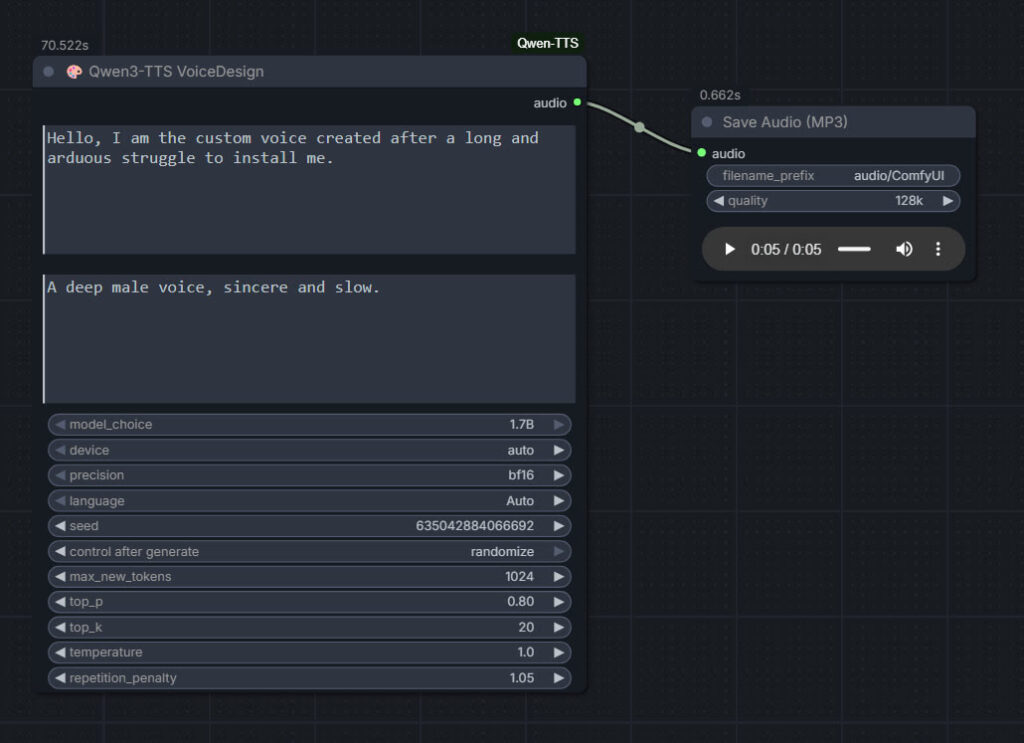

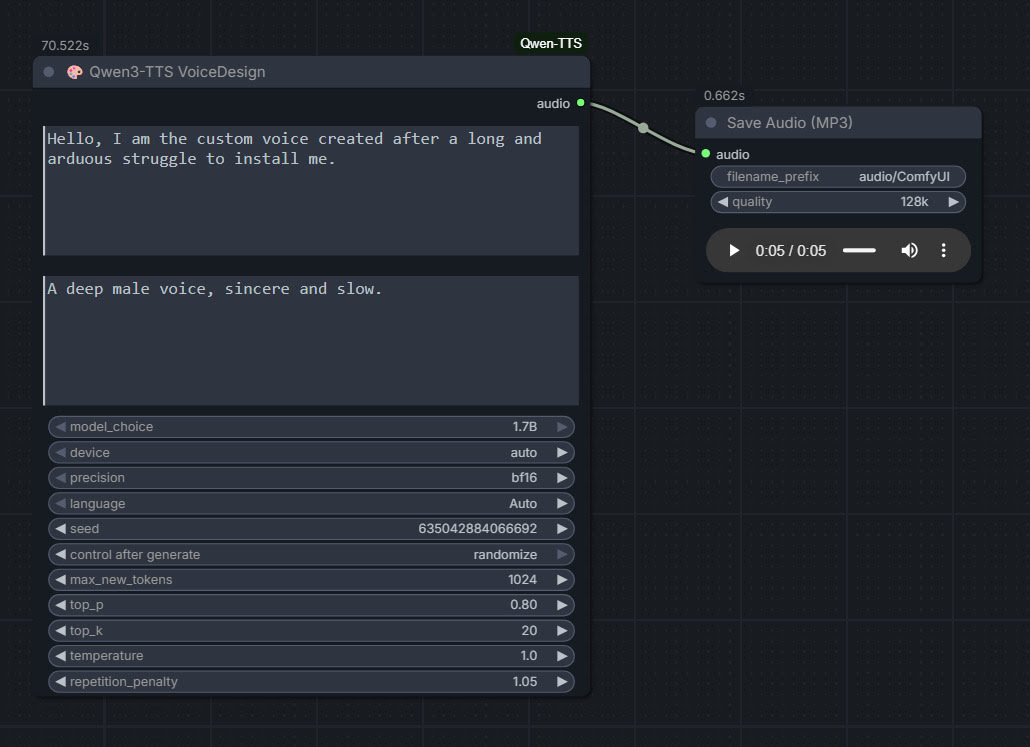

Qwen3 TTS – install and test in ComfyUI

Qwen3 TTS has been released, and it allows local ‘prompt to custom character’ voices. This adds a whole new dimension to local text-to-speech (TTS). It’s also a pleasingly small model at around 5Gb total (if you already have many TTS Python requirements), so is very feasible for those with older graphics cards and slower Internet connections. It has an Apache 2.0 license, so is fully open-source and available for commercial use. All the below requirements are free, as is the way with local AI.

As you can see, you can describe your exact voice and the audio generated conforms to the description. Voices can be described with great detail, far more than shown above, and their modulation over time also (e.g. “rising excitement”). There are obvious uses here for unusual character voices for animation, games, audio drama, vocal additions to audio soundscapes, etc.

Tested and working, after a lot of work. Here’s how to manually install for ComfyUI portable:

1. In ..\ComfyUI\models\ create the new local folders ..\ComfyUI\models\qwen-tts\Qwen3-TTS-12Hz-1.7B-VoiceDesign\ and its subfolder ..\speech_tokenizer\

2. Download the required models from Hugging Face, at Qwen3-TTS-12Hz-1.7B-VoiceDesign and speech_tokenizer.

Put the downloaded files into their locally pre-prepared folder and sub-folder.

3. Now get FlybirdXX’s ComfyUI-Qwen-TTS custom nodes to run these models. Windows Start button, CMD, cd into the ComfyUI custom nodes directory, then…

git clone https://github.com/flybirdxx/ComfyUI-Qwen-TTS

4. Install the requirements for the new custom nodes. Start, CMD, cd to the ComfyUI embedded Python directory, then…

C:\ComfyUI_portable\python_standalone\python.exe -s -m pip install -r C:\ComfyUI_portable\ComfyUI\custom_nodes\ComfyUI-Qwen-TTS\requirements.txt

(Replace ComfyUI_portable with whatever your local path is).

There should be no conflicts, as yesterday’s patch for these custom nodes fixed the official Qwen TTS demanding transformers==4.57.3 which could have killed Nunchaku (which requires a lower version).

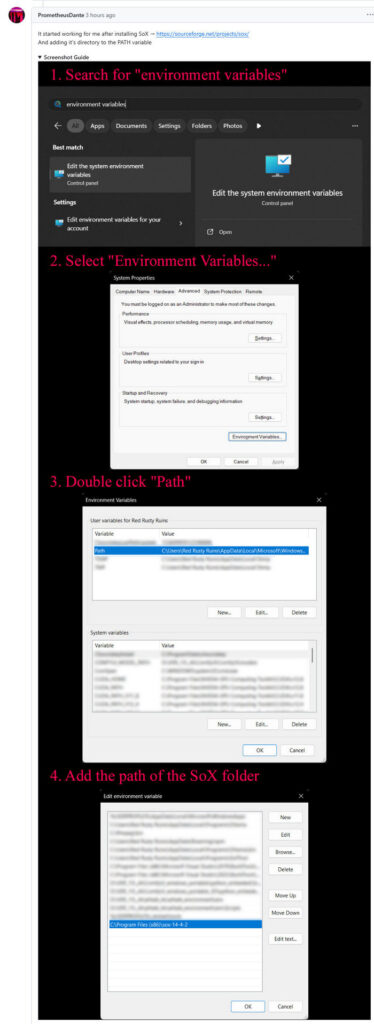

5. These Custom Nodes require a download of SoX which is an .EXE installer. SoX is a venerable freeware sound-exchange code library, kind of like ImageMagick… but for sound. After install you must add it to your Windows PATH. Thanks to Promethean Dante for the fix here…

Looking at the node code it seems SoX is only needed if you try to generate on CPU rather than GPU, but the lack of it prevents the nodes from loading in ComfyUI. It seems you need both the Python sox module installed (it installed along with the requirements.txt – see above), and its Windows framework via the .EXE installer.

6. Start ComfyUI, and set up a simple workflow thus with the new nodes…

Time: 70 seconds for a five second clip, on a 3060 12Gb card. Reasonable, not super-turbo but workable.
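If the nodes refuse to load, the two most likely culprits from the steps above are a mis-named model folder (step 1) or SoX missing from the PATH (step 5). Here is a rough pre-flight check, as a sketch only. The `preflight` function is my own invention and is not part of the custom nodes; it only assumes the folder names given in step 1.

```python
import shutil
from pathlib import Path
from typing import List

# Folder names as given in step 1 of the install notes.
MODEL_DIR = Path("qwen-tts") / "Qwen3-TTS-12Hz-1.7B-VoiceDesign"


def preflight(comfy_root) -> List[str]:
    """Return a list of problems found. An empty list means all checks passed."""
    problems = []
    model_dir = Path(comfy_root) / "models" / MODEL_DIR
    for folder in (model_dir, model_dir / "speech_tokenizer"):
        if not folder.is_dir():
            problems.append(f"missing folder: {folder}")
        elif not any(folder.iterdir()):
            problems.append(f"folder is empty: {folder}")
    # shutil.which searches the PATH, much as the command prompt does.
    if shutil.which("sox") is None:
        problems.append("sox not found on PATH")
    return problems


# Example: point this at your own ..\ComfyUI\ folder.
for problem in preflight(r"C:\ComfyUI_portable\ComfyUI"):
    print(problem)
```

It does not check that the downloaded model files are complete, only that the folders exist and have something in them.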

The basic requirements of Qwen3 TTS are compatible with a ComfyUI portable install (Python 3.8 or higher, PyTorch 2.0 or higher), so the above custom node set won’t bork your PyTorch by trying to upgrade it. Beware other similar custom nodes for Qwen3 TTS in ComfyUI that will try to upgrade PyTorch to 2.9 (not good, for a portable Comfy).

Contest: Internet Archive’s Public Domain Film Contest 2026

The copyright release season approaches. The Internet Archive is running a contest for creative short films that use public domain material, especially the releases due on 1st January 2026.

Make a 2-3 minute short film, and add an equally open soundtrack. No ban on the use of AI, which is especially notable since good-quality local AI Video2Video generation has become accessible/affordable in the last year. But I’d imagine that the original footage is what the judges will be looking out for. The 1930 date suggests obvious linkages with early pulp science-fiction. Deadline: 7th January 2026.

Here’s a survey of what’s entering the public domain in 2026, with a focus on fantasy, science-fiction and horror.

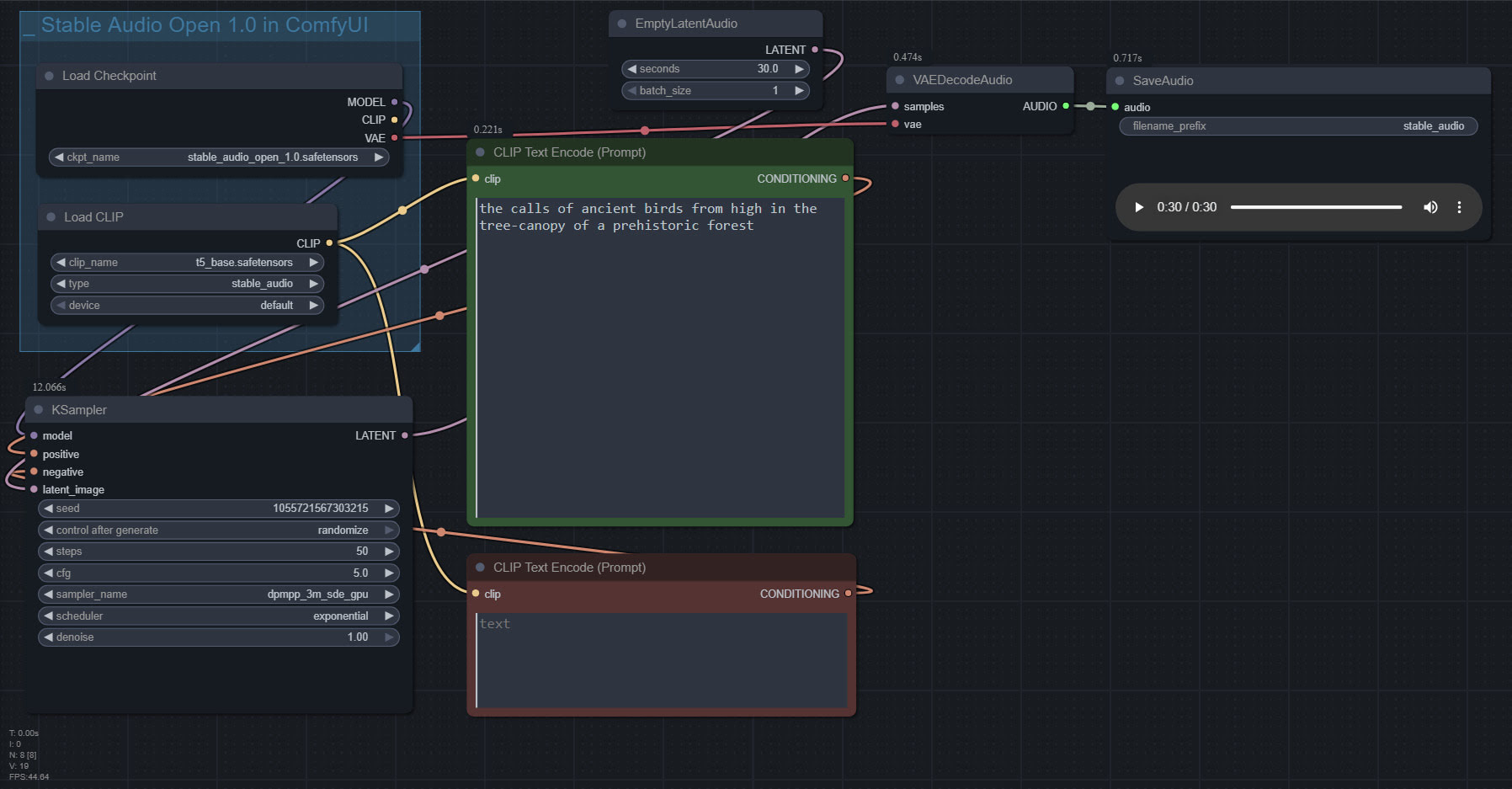

Stable Audio 1.0 in ComfyUI

Stable Audio 1.0 is a free “prompt to sound-fx” generator, based on an ingestion of the well-tagged sound FX files at the huge public-domain Freesound website. As such it produces royalty-free sound FX clips, of up to 47 seconds in length. Here it is, working in ComfyUI portable.

Workflow:

1. Copy the 4.7Gb model.safetensors from the Archive.org standalone’s ..\Stable-audio-open-1.0-webui-portable-win\stable-audio-tools\models folder. The standalone’s .torrent file is the easiest, most hassle-free and re-startable way to get this huge file. Put it in ../ComfyUI/models/checkpoints and then rename it to stable_audio_open_1.0.safetensors

2. Download the 800Mb Google T5 encoder model.safetensors file from HuggingFace, rename it t5_base.safetensors and copy that into ../ComfyUI/models/text_encoders/

That’s all you need. Just set up the workflow as seen above, and you’re ready to generate. All the other guff in the Archive.org Stable Audio portable is now taken care of by ComfyUI. ComfyUI audio generation also feels faster than the standalone.
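The two copy-and-rename steps can also be scripted, which avoids typos in the new filenames. A minimal sketch: the `install_model` helper is hypothetical (my own naming), and the source paths in the commented usage are placeholders for wherever your downloads actually live.

```python
import shutil
from pathlib import Path


def install_model(source_file, comfy_root, subfolder, new_name):
    """Copy source_file into <comfy_root>/models/<subfolder>/ under new_name."""
    target_dir = Path(comfy_root) / "models" / subfolder
    target_dir.mkdir(parents=True, exist_ok=True)
    target = target_dir / new_name
    if target.exists():
        # Never silently replace a multi-gigabyte model that is already there.
        raise FileExistsError(f"refusing to overwrite {target}")
    shutil.copy2(source_file, target)
    return target


# Usage (placeholder paths, adjust to your own PC):
# install_model(r"D:\downloads\sa\model.safetensors", r"C:\ComfyUI_portable\ComfyUI",
#               "checkpoints", "stable_audio_open_1.0.safetensors")
# install_model(r"D:\downloads\t5\model.safetensors", r"C:\ComfyUI_portable\ComfyUI",
#               "text_encoders", "t5_base.safetensors")
```

Copying rather than moving leaves the standalone install intact, at the cost of another 5Gb or so of disk space.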

In the above workflow, setting “batch” higher than “1” seems not to work.

I found it is possible to multitrack/mix more than one FX, via the prompt rather than nodes…

A balanced mix between a good field recording of a man walking through dry leaves in winter, and a recording of small birds calling plaintively in the surrounding Canadian boreal forest.

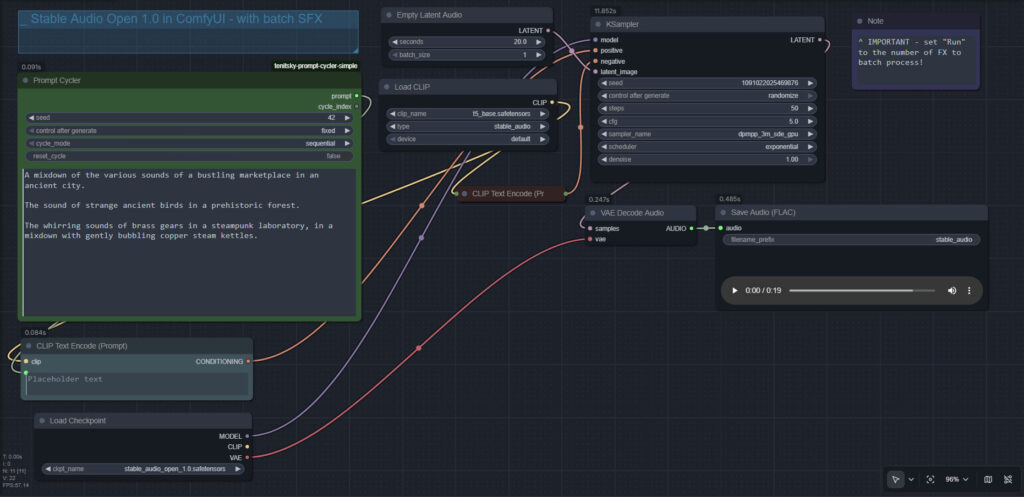

Update: This simple batch workflow works for batching different prompts and/or getting multiple ‘takes’ of the same prompt…

Local Microsoft Azure AI voices

The original Msty (1.9.2, not the flaky new Studio version) is a fine free desktop host for running local offline LLMs (‘AIs’). But it has no offline text-to-speech. Your AIs can’t talk, unless you get an API key and are always online.

One offline solution is the freeware Simple TTS Reader 2.0, which reads whatever gets sent to the Windows clipboard. Very very simple, it just does the job — anything copied to the clipboard gets read aloud. Great, but sadly it can only use Microsoft Speech voices, which are rather robotic and limited. It appears Microsoft has moved on from these, to its new and far better Azure TTS voices.

However, there’s a hack to get these Azure voices locally. There’s a handy NaturalVoiceSAPIAdapter from GitHub, with a straightforward Windows installer. This freeware makes Microsoft’s Azure natural (aka ‘neural’) TTS voices accessible locally to some of the SAPI5 compatible TTS desktop software packages. On Windows 11, these more advanced voices are otherwise locked to the Windows Narrator for local use, and no other software can use them (booo…). But now Simple TTS Reader can use them too.

As well as NaturalVoiceSAPIAdapter you also need to get your target Azure voice from this selection of free voice downloads. Unzip it as directed and put it somewhere sensible. When you install NaturalVoiceSAPIAdapter, you need to tell the software where the voice is on your PC, e.g. C:\TTSVoices\ Remember: Un-zip, don’t install in the usual installer manner.

After that, restart Simple TTS Reader 2.0 and you have a good local Azure TTS voice for automatically reading whatever is sent to the clipboard. Now when you hit ‘Copy to Clipboard’ at the end of a Msty LLM response, the text will be read in a reasonably good AI voice.

Regrettably Msty can’t automatically ‘Copy to Clipboard’ at the end of each LLM response. It has to be done manually, by clicking the icon. Ideally, Msty would add a “copy each new sentence to the clipboard, on completion” option.
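For the curious, the “each new sentence, on completion” idea would look something like the sketch below. This is purely illustrative: as far as I know Msty exposes no hook for it, the `SentenceBuffer` class is my own invention, and the final step of handing each sentence to the clipboard is left out.

```python
import re

# Split on whitespace that follows a full stop, exclamation mark or question mark.
# Crude: abbreviations such as "e.g. " will also be treated as sentence ends.
_SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


class SentenceBuffer:
    """Collect streamed text and hand back sentences as they complete."""

    def __init__(self):
        self._pending = ""

    def feed(self, chunk):
        """Add a chunk of streamed text and return any newly completed sentences."""
        self._pending += chunk
        parts = _SENTENCE_END.split(self._pending)
        # The last part may be an unfinished sentence, so hold it back.
        self._pending = parts.pop()
        return parts

    def flush(self):
        """Return whatever is left over once the response has ended."""
        rest, self._pending = self._pending.strip(), ""
        return [rest] if rest else []


buf = SentenceBuffer()
for chunk in ["Hello there. How ", "are you? Fine"]:
    for sentence in buf.feed(chunk):
        print(sentence)
for sentence in buf.flush():
    print(sentence)
```

A sentence is only released once some whitespace arrives after its closing punctuation, which is why the `flush` call is needed at the very end of a response.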

Having Azure voices locally on your PC may also interest animators who’d like to have such quality voices without going online. Of course, there are also dedicated TTS AIs now… but they can be very fiddly to set up, require many Gbs of downloads and disk space, and also a good graphics card to run them. The above fast Windows 11 solution requires a mere 80Mb in total and no graphics card.

The Balabolka TTS freeware can also see the Azure local voices, but note that it’s 32-bit software and thus you will need to install both 32-bit and 64-bit versions of NaturalVoiceSAPIAdapter.

iClone goes full AI, with robust ComfyUI integration

Reallusion’s iClone embraces AI image rendering, with a new official ComfyUI integration. It looks like they’re going all-in for AI, regardless of the AI doom-moaners. Good for them.

We’re excited to launch the Open Beta of AI Render plugin, a powerful and completely free tool that bridges real-time 3D animation with AI-powered rendering, seamlessly integrated into the ComfyUI workflow. … AI Render uses custom nodes that connect Reallusion’s 3D environments directly into the ComfyUI ecosystem.

Now in Open Beta. Officially supports only Stable Diffusion 1.5 and WAN 1.3b video, though node-wranglers may find information about other types of AI image-gen on the Reallusion forums. Likely the new Wan 2.2 relatively lightweight 5B model will be of most interest there.

Seems to have a standard approach. The Comfy nodes appear to take Depth, Pose, Normals and Edge preprocessor data simultaneously from iClone’s real-time viewport, also marrying them with one-click style presets (it’s an IP-adapter) for refinement of output. The two comics presets are manga style, so there’s no western comics style as yet.

Interestingly also… “AI Video Generation models optimized for consistent frame-by-frame results”

How consistent? That would be the worry there. Still images are one thing but, even with four Controlnets working at once, are we still going to get slightly “wobbly” faces? One would also have to worry about shifting colours.

Microsoft’s New Ray Tracing AI – now in ComfyUI

Life moves fast in AI-land. Last month I blogged here about Microsoft’s New Ray Tracing AI. This month — courtesy of Paul Hansen of Germany — Microsoft’s new tech is now free in ComfyUI. Along with an outstanding install guide and documentation. All free. Currently, 2 seconds of finished raytraced animation takes 22 seconds on a 4060 card. Import of .FBX is coming soon.

Microsoft’s New Ray Tracing AI, now at 16 fps

Microsoft’s New Ray Tracing AI (YouTube Video, six minutes with hardcoded ad at the end). They ingested 16 million ray traced 3D images, to make an AI that simply infers (from its past knowledge) what the play of real light in a 3D scene should be. Then the AI applies it and ‘renders’ the scene in a microsecond. You can tweak materials, and it updates instantly.

Animated? Yup, their pseudo-raytracing currently clocks in at 16 frames per second (on MS’s research labs hardware, admittedly). Quite respectable, and there are also frame-interpolation AIs out there that might boost it to 30FPS.

Physics? Yup, they even added that too. Even dynamic water.

Generative AI overlay? Not yet. But if this gets a general open-source release and isn’t locked away as an exclusive for Microsoft Flight Simulator, someone will add generative AI imaging to the mix. Imagine not only AI raytracing, but AI raytracing + a layer of SD ‘style change’ based off the 3D scene (but still faithful to it). The ‘Hollywood-real look’ for your 3D scene, in near real-time and beautifully lit.

What a time to be alive. Indeed, what a time to have a huge Poser runtime. Poser has such massive possibilities ahead, if only it can shrug off the AI-haters. The devs don’t even have to develop for it, I would imagine. Just open up some general hooks in Python, to let users hook into whatever local AI they choose to run.

Release: IK Studio for After Effects

New to me, Richard Rosenman’s $50 IK Studio – Inverse kinematics plugin for After Effects (February 2025). Apply IK to the joints of your 2D cutout cartoon characters. He also has a survey of The Best Rigging Tools for After Effects for 2D characters and props. Probably far easier to do such animation in Moho or Cartoon Animator. But if you have to use After Effects, this appears to fill a gap.

Release: Pencil Pro, for Blender

I’m all for natural media emulation from 3D scenes. So I was pleased to see the new $15 Pencil Pro add-on for Blender, allowing your 3D scene to emulate a pencil drawing. The result is obviously not great at long distance (e.g. a city scene), where it looks like a point-cloud with the points mapped to tiny graphite strokes. Better for medium and close animation shots, though there’s still a distinct Rhubarb & Custard-style wobble. Which is charmingly old-school in its way, and would be acceptable to young kids watching shorts.

Suitably rough and sketchy and believable, if you overlook the polygonal angles from the 3D. No per-frame autocolour, but something that auto-colours greyscale (e.g. Akvis Coloriage AI) might give colourisation that is not too wobbly, when run frame by frame.

Release: Framepack

Relatively easy ‘video diffusion’ AI is here, and working on relatively low-end PCs. Framepack is fully open source and can be run locally. Feed it a source image and a simple instructional prompt, and generate up to a minute of video at 30FPS. With reasonable speed, and without needing a stupid amount of VRAM. Impressive. It’s from lllyasviel, the guy who made the SD Controlnets, Fooocus and WebUI Forge.

Animated PNG demo, 4Mb.

There is now a one-click Windows installer for Windows 10 (though I guess it might be hacked to use CUDA 11.x + an earlier compatible PyTorch, for Windows 7 users). Note that, once installed, it then fetches 40+Gb of models and controlnets etc. (Models are here for separate download). So you’re likely to need 50Gb+ of space for a local install. There’s no standalone .torrent at present.
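Before committing to a download of that size, it may be worth a quick check that the target drive has the room. A small sketch using only the Python standard library, where the 50Gb default is simply my estimate from above and `has_room` is a made-up helper name:

```python
import shutil

GIB = 1024 ** 3  # bytes in one gibibyte


def has_room(path, required_gb=50):
    """Return (enough_space, free_gb) for the drive that holds path."""
    free_bytes = shutil.disk_usage(path).free
    return free_bytes >= required_gb * GIB, free_bytes / GIB


ok, free_gb = has_room(".")
print(f"{free_gb:.1f}Gb free, enough for Framepack: {ok}")
```

Pass it the folder you plan to install into, rather than ".", if that is on a different drive.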

It can do widescreen as well as square and phone-screen format. Appears to be limited to about 640px in generation size, so upscaling would be needed. No pristine 4k footage, then. Since it works from any pre-made image, the potential for animating Poser and DAZ renders seems obvious. No whining about ‘piracy’ either, that way.

And the fact that it’s free and can speedily generate somewhat lengthy clips means it has potential for generating lots of possible clips and then stitching a YouTube movie together from the best (though of course there’s no lip-sync).

Due in May, your $10,000 AI virtual movie-studio?

Available in May 2025, the new Digits off-the-shelf AI box direct from NVIDIA, starting at $3,000.

Inside will be a special AI-tuned Linux system, NVIDIA’s special GB10 processor and 128GB of memory, and it should easily run local AI models of “up to 200B” in size. 70B would usually be the maximum for a local PC, so this is effectively a doubling of that capability, plus some overhead left over to run other software. Such as audio voice recognition/synthesis, potentially meaning that models such as an advanced human-ish roleplaying LLM could have real-time fluid voice conversations with a human.
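Some back-of-envelope arithmetic shows how a 200B model might fit into 128GB. This is a rough sketch on my own assumptions: that the model is quantized to around 4 bits per parameter, and that only the weights are counted (the working memory needed during generation comes on top).

```python
def weights_gb(params_billions, bits_per_param):
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    # billions of params * bits each / 8 bits per byte = billions of bytes
    return params_billions * bits_per_param / 8


for size in (70, 200):
    for bits in (4, 8, 16):
        print(f"{size}B at {bits}-bit: about {weights_gb(size, bits):.0f}GB")
```

On those assumptions a 200B model needs about 100GB at 4-bit, which leaves some headroom inside 128GB. At 16-bit the same model would need around 400GB, so the “up to 200B” claim presumably relies on heavy quantization.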

The box can run as a standalone, or be plugged into a normal PC. Two can be placed side-by-side to make an ‘AI super-processor’ capable of running the largest and (arguably) the most powerful AIs. Two boxes should come in at under $10,000, thus superpowered AI becomes affordable for many small businesses. Provided they can afford the electricity — I’ve heard nothing about power consumption as yet.

It would be very interesting to see what creative hackers and tweakers can do with a $10,000 setup and the ability to run multiple AIs and other software, and have the AIs control the software (e.g. Poser, which ‘talks Python’ just as the AIs do) and talk to each other. A complete virtual movie-studio, with voice-actors, in your garage?

Flow (2024)

YouTube playlist for the award-winning made-with-Blender animated movie Flow (2024). Includes three interviews with the maker, and two long “making of” videos.

Wonder Animation

Autodesk Unveils Wonder Animation. Interesting…

* Film your sequence as live-action…

* … then an AI translates what it sees to 3D polygons and rigs (3D scene, figures, mo-cap, cameras, camera movements)

* … then you can render the 3D consistently and quickly as a toon in 3D (Maya, Blender, Unreal game engine).

The drawback appears to be that it’s an online cloud service. Not local desktop software. Still, I like the idea.