Stable Audio 3 has been released. Free, offline and local. Feed it a text prompt and it generates field recordings, movie-style sound effects, mixed-effect soundscapes… and now also music. It’s of obvious use for those creating animations, visual novels, motion comics, YouTube illustrated audiobooks, Ken Burns-style documentaries, etc.

It’s very fast and small, and the outputs are free for commercial use, at full 44.1 kHz stereo quality. Even the very small version 3 models (2.2 GB, plus a 1.1 GB text encoder) can produce up to two minutes of audio, and can apparently generate this in a few seconds even on a CPU.

Very importantly for creatives, the new version offers iterative editing of outputs. You can regenerate just the bits you don’t like (audio ‘inpainting’) and keep the rest. And the speed should make that a relatively easy matter. You can also auto-extend your audio in the same style (audio ‘outpainting’).

Trained on legally clean sources, so the anti-AI mob can’t wail about ‘stealing’.

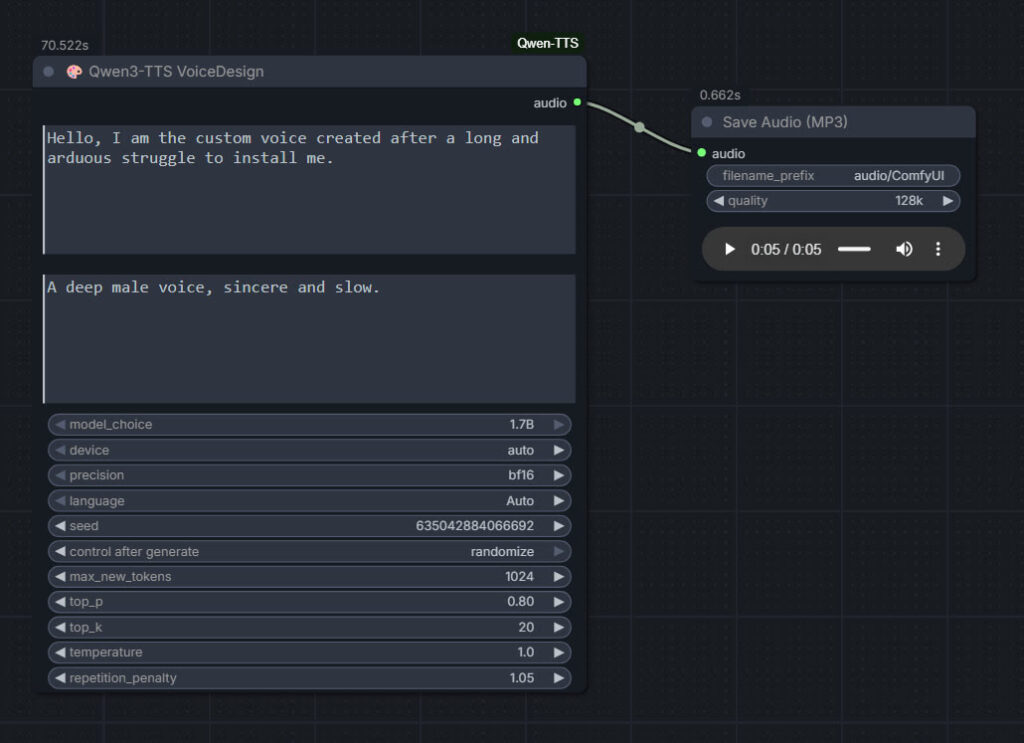

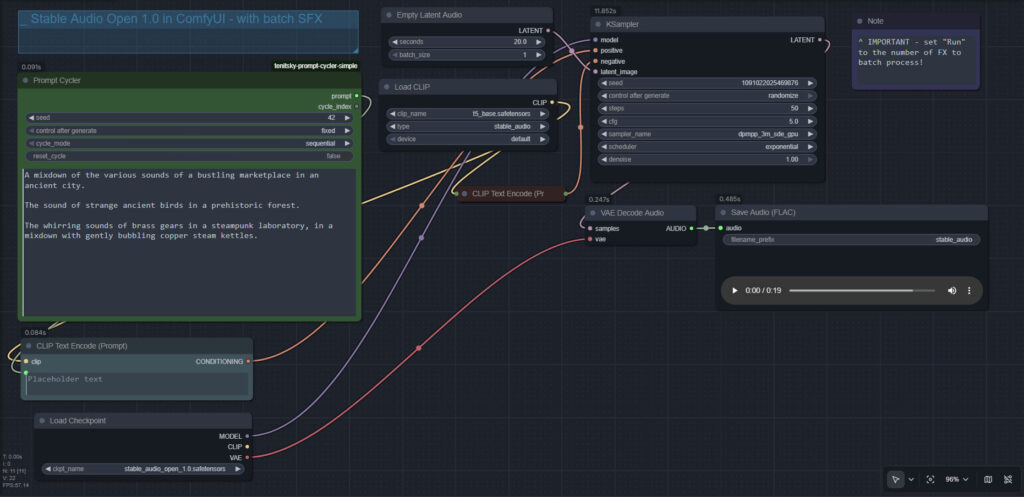

The official release is gated behind a Hugging Face sign-in, presumably to guard against vexatious lawsuits re: misuse by miscreants. But the ComfyUI models and encoder are here and freely available: ../Comfy-Org/stable-audio-3. I’m still downloading, but I guess it might then need a ComfyUI update to get the text encoder recognised? Update: it needs ComfyUI 0.22 or higher.

What’s currently missing is i) an example prompt structure to generate a complex but coherent sequential soundscape (can JSON be used, with timings?); ii) a working example of how the iterative editing is done; and iii) an optimised ComfyUI ‘studio’ workflow (e.g. for optimised stereo separation and movement, and for running the Small SFX and Small Music models alongside each other for a basic multitrack in the workflow).

For the previous Stable Audio, note that one could multitrack simply via the prompt, e.g. “A balanced mix between a good field recording of a man walking through dry leaves in winter, and a recording of small birds calling plaintively in the surrounding Canadian boreal forest.” The word “mixdown” would also work in place of “mix”. I assume this will work on the new version 3, once I get it installed and working.
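If you want to try lots of layer combinations, the ‘mix’ wording above can be templated. What follows is only a rough Python sketch of that idea: it does nothing but join strings, it doesn’t touch Stable Audio or ComfyUI at all, and the function name `build_mix_prompt` and its parameters are my own invention for illustration. Whether version 3 responds to such prompts the same way is still untested.

```python
def build_mix_prompt(layers, mix_word="balanced mix"):
    """Join several layer descriptions into one 'mix'-style text prompt.

    Hypothetical helper: it only assembles a string, following the
    'A balanced mix between X and Y' wording used for the older model.
    """
    # Tidy each description and drop any empty ones.
    cleaned = [layer.strip().rstrip(".") for layer in layers if layer.strip()]
    if len(cleaned) < 2:
        raise ValueError("a mix needs at least two layer descriptions")

    if len(cleaned) == 2:
        # Two layers: 'between X and Y'.
        body = f"between {cleaned[0]} and {cleaned[1]}"
    else:
        # Three or more layers: 'of X, Y, and Z'.
        body = "of " + ", ".join(cleaned[:-1]) + f", and {cleaned[-1]}"

    return f"A {mix_word} {body}."


if __name__ == "__main__":
    prompt = build_mix_prompt([
        "a good field recording of a man walking through dry leaves in winter",
        "a recording of small birds calling plaintively in the surrounding "
        "Canadian boreal forest",
    ])
    print(prompt)
```

The resulting text would then be pasted into whatever prompt box or node you use. Passing `mix_word="mixdown"` swaps in the alternative wording.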

I’m less interested in the music than in the SFX and soundscapes, but if the music interests you then note the new Stable Audio Prompt Guide for Music at their site.