Bagginsbill’s Load and scatter script for Poser loads a school of 150 fish and places them in semi-random positions within a tight, natural-looking distribution.

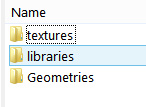
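To give a feel for what "semi-random positions within a tight distribution" can mean, here is a minimal sketch in plain Python. It is not Bagginsbill's code and it does not call Poser at all. The function name `scatter_positions`, the choice of a Gaussian distribution, and the spread values are all assumptions made for illustration.

```python
import random

def scatter_positions(count=150, spread=0.5, seed=None):
    """Return `count` (x, y, z) offsets clustered around the origin.

    Hypothetical helper: the Gaussian spread is an assumed way of getting
    a tight shoal, not necessarily what the real script does.
    """
    rng = random.Random(seed)
    positions = []
    for _ in range(count):
        # Gaussian offsets bunch most items near the centre,
        # leaving only a few stragglers at the edges.
        x = rng.gauss(0.0, spread)
        y = rng.gauss(0.0, spread * 0.4)  # flatter vertically (arbitrary choice)
        z = rng.gauss(0.0, spread)
        positions.append((x, y, z))
    return positions

shoal = scatter_positions(seed=1)
print(len(shoal))  # 150
```

In a real Poser script each tuple would then be applied to one loaded copy of the prop. Passing a fixed `seed` makes the layout repeatable, which is handy if you like a particular shoal and want it back.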

Currently it’s set, via a line in the LoadFishes.py script, to load the freebie fish prop from :Runtime:Libraries:props:TrekkieGrrrl:Fish.pp2.

Once the script has spent about 15 seconds making the shoal and returned UI control to you, select Fish_1 and move it, and the shoal will spin and tilt as if it were a single prop.

I’ve tested it, and it works fine in Poser 11.2. It should presumably also work for fireflies, pollen floaters, asteroids, moment-of-explosion debris clouds (“Hulk… SMASH!”), perhaps even a “distant low-poly star-fighter mega-battle”, and so on, provided you open the script in a text editor such as Notepad++ and change the path to the base prop. For a flock of birds, though, one of Ken G’s paid-for scripts is probably going to be better, because a flock needs a variety of wing-flaps.

If you need to do something similar but constrained within a container and fully random (e.g. fireflies in a jar), then see my recent test of the free ‘Props in a container’ script.