The Common Crawl service is an open monthly crawl of the Web. It currently weighs in at a whopping 145TB, and is seemingly limited to Web sites with high-ranking inbound links.

How does one discover whether a URL is being crawled by Common Crawl? With the Common Crawl Index, a URL lookup tool for Common Crawl. From there it is apparently fairly easy to work down the index tree to identify and extract, say, just the Wikipedia index segments from the monthly crawl.
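Why would pulling out one site's segment be "fairly easy"? As far as I understand it, the index is sorted by a SURT-style key, which writes the host backwards (so `en.wikipedia.org` becomes `org,wikipedia,en)`). That puts all of a domain's pages next to one another. The Python sketch below illustrates the ordering. `simple_surt` is a hypothetical, simplified helper of my own, not Common Crawl's actual canonicalizer, and the URLs are made-up samples.

```python
from urllib.parse import urlsplit


def simple_surt(url: str) -> str:
    """Build a simplified SURT-style sort key for a URL.

    Assumption: a rough approximation for illustration only. It reverses
    the host labels, drops a leading "www.", ignores any port, and
    lowercases everything; real SURT canonicalization does more.
    """
    parts = urlsplit(url if "://" in url else "http://" + url)
    host = parts.hostname or ""  # urlsplit already lowercases the hostname
    if host.startswith("www."):
        host = host[4:]
    key = ",".join(reversed(host.split("."))) + ")" + (parts.path or "/")
    if parts.query:
        key += "?" + parts.query
    return key.lower()


# Hypothetical sample URLs, not taken from a real crawl.
urls = [
    "https://en.wikipedia.org/wiki/Romance_novel",
    "http://jprstudies.org/about/",
    "https://de.wikipedia.org/wiki/Liebesroman",
    "https://www.example.com/",
    "https://en.wikipedia.org/wiki/Main_Page",
]

keys = sorted(simple_surt(u) for u in urls)
# Every Wikipedia key shares the "org,wikipedia," prefix, so once sorted
# they form one contiguous run that can be sliced out in a single pass.
wikipedia_keys = [k for k in keys if k.startswith("org,wikipedia,")]
for key in keys:
    print(key)
```

Because the reversed host comes first in each key, the three Wikipedia entries end up adjacent however the original list was ordered.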

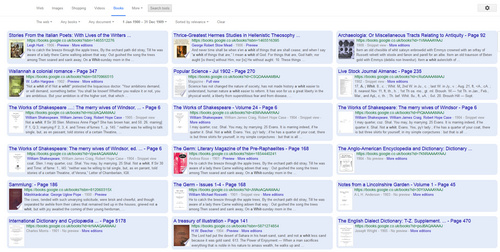

A test with some randomly selected (and rather more obscure than otherwise) JURN URLs suggests that Common Crawl does indeed visit a wide range of URLs. You can see which actual pages on a site are being indexed by Common Crawl by replacing the domain after the `*.` in this query:

http://index.commoncrawl.org/CC-MAIN-2015-06-index?url=*.jprstudies.org&output=json
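The same lookup can be scripted rather than pasted into a browser. Below is a minimal Python sketch using only the standard library. It assumes the index server answers `output=json` with one JSON record per line, which is how the results appear; the helper names are my own and hypothetical.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Base URL of the index server, as in the query link above.
INDEX_SERVER = "http://index.commoncrawl.org"


def build_index_query(crawl_id: str, domain: str) -> str:
    """Build a '*.domain' lookup URL for one monthly crawl's index."""
    # Keep '*' literal so the wildcard matches the hand-written link above.
    params = urlencode({"url": f"*.{domain}", "output": "json"}, safe="*")
    return f"{INDEX_SERVER}/{crawl_id}-index?{params}"


def parse_index_lines(text: str) -> list[dict]:
    """Parse the index response: one JSON object per non-blank line."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


def fetch_index(crawl_id: str, domain: str, timeout: float = 60.0) -> list[dict]:
    """Fetch and parse the index records for a domain (needs network access)."""
    with urlopen(build_index_query(crawl_id, domain), timeout=timeout) as resp:
        return parse_index_lines(resp.read().decode("utf-8"))


print(build_index_query("CC-MAIN-2015-06", "jprstudies.org"))
```

Calling `fetch_index("CC-MAIN-2015-06", "jprstudies.org")` would then return a list of dictionaries, one per captured page, assuming that crawl's index is still being served.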

In the above instance, the crawler doesn’t appear to be going very deep into the heaving bosom of the Journal of Popular Romance Studies. To create a version of JURN on Common Crawl, the crawler would need to be told explicitly to do a deep harvest of each URL, rather than only collecting pages with high-ranking inbound links. That’s my guess, and it might explain the sparse harvest of jprstudies.org. The guess seemed to be confirmed when I found a Common Crawl forum comment from August 2015 by Tom Morris…

“…given a fixed budget, focusing on crawling entire domains, whether by using sitemaps or other means, will, necessarily, reduce the number of domains which are crawled. Focusing on crawling all structured product data will mean sacrificing crawling popular pages.”

So the JSON output for the above link effectively tells you what your domain’s most popular pages are, as judged by inbound links from quality sources. That, in itself, may be rather useful for some.
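To turn that JSON output into a quick overview of which parts of a site made it in, one could group the captured URLs by their first path segment. This is a minimal sketch, assuming each parsed record carries a `url` field as in the index output. The function name and the sample records are hypothetical.

```python
from collections import Counter
from urllib.parse import urlsplit


def pages_by_section(records: list[dict]) -> Counter:
    """Count distinct captured URLs per top-level path segment."""
    # The same page can be captured more than once, so de-duplicate first.
    urls = {r["url"] for r in records if "url" in r}
    sections = Counter()
    for url in urls:
        first = urlsplit(url).path.strip("/").split("/")[0]
        sections[first or "(root)"] += 1
    return sections


# Made-up records standing in for parsed index output.
records = [
    {"url": "http://jprstudies.org/"},
    {"url": "http://jprstudies.org/about/"},
    {"url": "http://jprstudies.org/2011/08/an-article/"},
    {"url": "http://jprstudies.org/2011/08/an-article/"},
    {"url": "http://jprstudies.org/2011/10/another-article/"},
]
for section, count in pages_by_section(records).most_common():
    print(section, count)
```

Counting distinct URLs rather than raw records keeps repeat captures of one popular page from inflating its section.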

What about PDFs? Some PDFs do seem to be collected and indexed alongside the HTML, but not many. For instance, another forum comment showed a table for the March 2015 crawl, which had 3,111,864 PDFs against 1.6Bn HTML pages, or roughly one PDF for every 500 HTML pages. The PDFs that do make it in often appear to be truncated.
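The same index records could be used to gauge how thinly PDFs are represented for a given site. Here is a minimal sketch, assuming each record carries a `mime` field (the Common Crawl index output appears to include one, but check a real response). `mime_share` is a hypothetical helper. The last lines work through the March 2015 numbers quoted above.

```python
def mime_share(records: list[dict], mime: str = "application/pdf") -> float:
    """Fraction of index records whose 'mime' field equals the given type."""
    # Assumption: each record has a 'mime' field taken from the index output.
    if not records:
        return 0.0
    hits = sum(1 for r in records if r.get("mime") == mime)
    return hits / len(records)


# The March 2015 figures quoted above: 3,111,864 PDFs vs 1.6Bn HTML pages.
pdfs, html_pages = 3_111_864, 1_600_000_000
print(f"{pdfs / html_pages:.2%} of the HTML count")       # about 0.19%
print(f"one PDF per {html_pages / pdfs:.0f} HTML pages")  # about 514
```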